Most security systems are designed to help you respond faster. Fewer are designed to help you intervene before a trigger is pulled.

That gap is what separates video-based weapon detection from acoustic gunshot detection. Acoustic systems activate after a shot is fired. They tell you where it happened and get responders moving within seconds.

Video-based AI detection works earlier. It identifies a visible, brandished firearm the moment it appears on camera: before anyone is hurt, before a lockdown comes too late, and before the situation becomes a recovery operation rather than a prevention effort.

The difference isn't a minor technical distinction. It's a fundamentally different philosophy about where security should intervene on the threat timeline.

Both technologies are deployed in real environments today. Both have legitimate use cases. But for facilities where the priority is stopping a threat before it becomes an incident, understanding how each approach works, where each falls short, and what each actually costs is the foundation of a sound decision.

TL;DR

- Acoustic gunshot detection activates after a shot is fired. It's a response tool, not a prevention tool.

- Video-based AI weapon detection identifies a visible firearm before discharge, giving security teams an actionable window to intervene.

- Acoustic detection performs best in outdoor, urban environments. It was not designed for indoor facility security.

- Layering both technologies can make sense for large outdoor campuses, but video-based detection should be the foundation for any indoor environment.

- For schools, hospitals, campuses, and public venues, the right question isn't which system responds faster. It's which system acts before a shot is ever fired.

How Acoustic Gunshot Detection Works

Acoustic gunshot detection uses a network of microphones and sensors mounted on buildings, streetlights, and utility poles to monitor ambient sound across a coverage area.

When the system picks up a loud, impulsive noise consistent with gunfire, it triangulates the location using the time difference between each sensor's reading.
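
To make the triangulation step concrete, here is a minimal sketch of time-difference-of-arrival localization in Python. It is an illustration only, not any vendor's actual algorithm. The sensor coordinates, the constant speed of sound, the flat 2D geometry, and the coarse grid search are all simplifying assumptions, and the function names are hypothetical.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, assumed constant for this sketch

def arrival_times(source, sensors, t0=0.0):
    """Time at which a sound emitted at t0 from `source` reaches each sensor."""
    return [t0 + math.dist(source, s) / SPEED_OF_SOUND for s in sensors]

def locate(sensors, times, extent=1000, step=5):
    """Grid-search the point whose predicted time differences best match the measured ones.

    Only differences relative to the first sensor are used, so the unknown
    emission time cancels out.
    """
    measured = [t - times[0] for t in times]
    best, best_err = None, float("inf")
    for x in range(0, extent + 1, step):
        for y in range(0, extent + 1, step):
            d = [math.dist((x, y), s) for s in sensors]
            predicted = [(di - d[0]) / SPEED_OF_SOUND for di in d]
            err = sum((p - m) ** 2 for p, m in zip(predicted, measured))
            if err < best_err:
                best, best_err = (x, y), err
    return best

# Hypothetical layout: four sensors at the corners of a 1 km square.
sensors = [(0, 0), (1000, 0), (0, 1000), (1000, 1000)]
true_source = (400, 650)
times = arrival_times(true_source, sensors, t0=12.3)
estimate = locate(sensors, times)
print(estimate)
```

A production system would have to cope with noisy timestamps, echoes, and sensors that miss the sound entirely, which is part of why the later filtering and review stages exist.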

That raw detection is only step one. The full process runs three stages:

- Algorithmic filtering: Machine learning models analyze the audio waveform and screen out car backfires, fireworks, and construction noise.

- Human review: A trained acoustic specialist at a 24/7 Incident Review Center confirms whether the sound is gunfire and adds context such as shot count or weapon type.

- Alert dispatch: A confirmed alert reaches law enforcement within 60 seconds of the shot being fired.

SoundThinking reports a 97% aggregate accuracy rate from 2019 to 2021, independently audited by Edgeworth Economics.

Acoustic detection was built for outdoor, urban environments where gunfire frequently goes unreported. According to SoundThinking, over 80% of gunfire incidents never generate a 911 call. It is deployed in more than 180 cities and used almost exclusively by law enforcement rather than private facility operators.

The system is triggered by a fired shot. That's not a flaw. It's the design. Acoustic detection was built to ensure law enforcement knows about every shooting faster than the 911 system allows. Whether that capability belongs in a school, hospital, or private campus security program is a different question entirely.

Best for: Municipal law enforcement managing outdoor gun violence across large urban areas where 911 reporting is unreliable.

Where Acoustic Detection Falls Short

Acoustic gunshot detection does what it promises. The limitations aren't about technical failure. They're about what the technology was never designed to do.

It only activates after a shot is fired

By the time an acoustic alert reaches your security team, a weapon has already been discharged. In a school hallway or hospital corridor, the first shot isn't a warning signal.

For facilities where the goal is intervention before violence occurs, a post-discharge alert isn't early warning. It's confirmation that prevention has already failed.

It was built for outdoor urban environments

ShotSpotter, SoundThinking's acoustic detection product, relies on sensor infrastructure designed for open, outdoor coverage areas. Sound behaves differently inside buildings, reflecting off walls and traveling unpredictably across floors.

Most schools, hospitals, and commercial campuses need indoor coverage precisely where acoustic detection is least effective.

The human review step adds latency

A mandatory human review before dispatch is what keeps the false positive rate low.

But it also means your team isn't notified until that review completes. In environments where seconds determine outcomes, a sub-60-second alert window still represents time spent after a shot has already been fired.

Cost and infrastructure are significant

Coverage typically runs $65,000 to $90,000 per square mile annually. That pricing reflects a citywide infrastructure investment, not a per-facility security tool.

For a single school or campus, the economics rarely work without grant funding.
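
As a rough back-of-the-envelope check on those figures, the sketch below turns the per-square-mile range into an annual budget range. It assumes pricing scales linearly with area, which is a simplification: minimum coverage areas and contract terms are not covered here, and the function name is hypothetical.

```python
LOW_PER_SQ_MILE = 65_000   # USD per square mile per year (low end quoted above)
HIGH_PER_SQ_MILE = 90_000  # USD per square mile per year (high end quoted above)

def annual_cost_range(square_miles):
    """Return (low, high) annual cost in USD, assuming strictly linear pricing."""
    return square_miles * LOW_PER_SQ_MILE, square_miles * HIGH_PER_SQ_MILE

low, high = annual_cost_range(3)
print(f"3 sq mi: ${low:,} to ${high:,} per year")
# prints "3 sq mi: $195,000 to $270,000 per year"
```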

It doesn't integrate naturally with facility security systems

ShotSpotter was built to notify law enforcement dispatch.

Triggering lockdowns, notifying staff, or feeding into a unified security dashboard typically requires additional configuration and third-party integration work that facility operators have to manage independently.

How Video-Based AI Weapon Detection Works

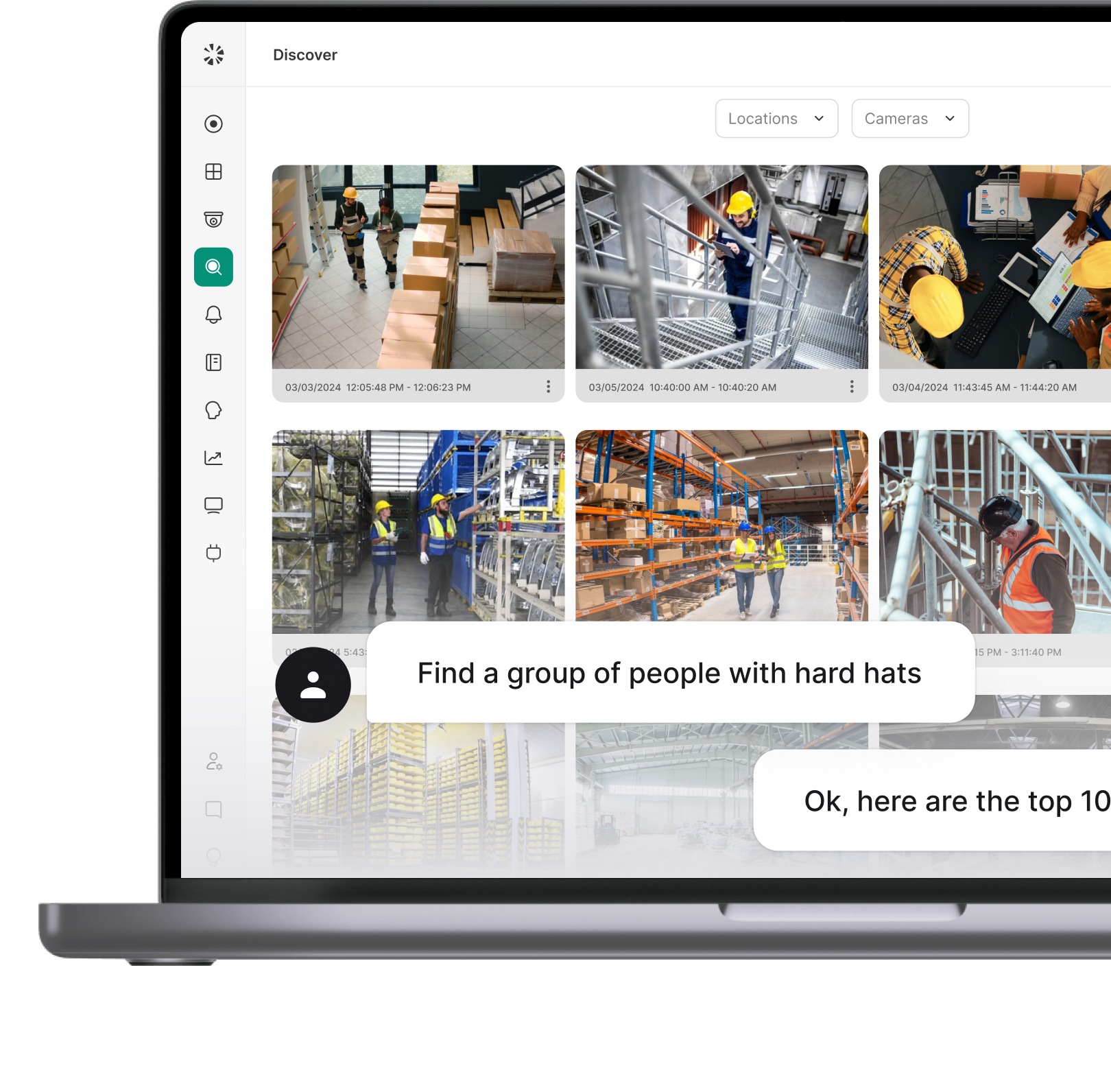

Video-based AI weapon detection runs continuously on your existing camera infrastructure.

Every frame of video is analyzed in real time by computer vision models trained to recognize visible, brandished firearms, including handguns and long rifles, across varying angles, lighting conditions, and environments.

The process moves through three stages, fast enough that the entire sequence completes in seconds:

Stage 1: Edge detection: The AI model runs locally on an on-site device, analyzing live video without sending raw footage to the cloud. When a potential firearm is identified in a frame, that data is flagged for further processing. This edge-first approach keeps bandwidth low and response times fast.

Stage 2: Cloud verification: The flagged detection is sent to the cloud, where additional AI models cross-reference it against known firearm characteristics. This secondary layer is what keeps false positive rates low without requiring a human reviewer in the loop.

Stage 3: Alert dispatch: Once confirmed, an alert is sent to your security team within seconds, including the camera feed, location, and a visual of the detection. Alert sequences are configurable. They can notify on-site staff, trigger lockdown protocols, or route directly to emergency services depending on how the system is set up.
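
The three stages above can be sketched as a small pipeline. This is a simplified illustration rather than any vendor's implementation. The thresholds, the `Detection` fields, the route names, and the idea of representing each model's output as a single confidence score are all assumptions made for the example.

```python
from dataclasses import dataclass

EDGE_THRESHOLD = 0.60   # hypothetical: edge model flags anything at or above this
CLOUD_THRESHOLD = 0.90  # hypothetical: cloud check must reach this to confirm

@dataclass
class Detection:
    camera_id: str
    location: str
    edge_score: float   # confidence from the on-site model
    cloud_score: float  # stand-in for the result of a second, remote model

def edge_stage(d: Detection) -> bool:
    """Stage 1: flag the frame locally if the on-site model sees a possible firearm."""
    return d.edge_score >= EDGE_THRESHOLD

def cloud_stage(d: Detection) -> bool:
    """Stage 2: confirm the flagged detection with a stricter secondary check."""
    return d.cloud_score >= CLOUD_THRESHOLD

def dispatch(d: Detection, routes: list[str]) -> list[str]:
    """Stage 3: build one alert message per configured route."""
    return [
        f"{route}: possible firearm at {d.location} (camera {d.camera_id})"
        for route in routes
    ]

def process(d: Detection, routes: list[str]) -> list[str]:
    if not edge_stage(d):
        return []  # nothing is flagged, nothing leaves the site
    if not cloud_stage(d):
        return []  # flagged at the edge, rejected on verification
    return dispatch(d, routes)

# Hypothetical alert sequence: staff first, then lockdown, then emergency services.
routes = ["on_site_staff", "lockdown", "emergency_services"]
alerts = process(Detection("cam-12", "North entrance", 0.82, 0.95), routes)
rejected = process(Detection("cam-07", "Cafeteria", 0.70, 0.40), routes)
print(alerts)
print(rejected)
```

The second detection is flagged at the edge but fails verification, so it produces no alerts. That is the role the secondary layer plays in keeping false positives down.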

No shot has to be fired. The alert window opens the moment a weapon becomes visible on camera.

Best for: Schools, hospitals, commercial campuses, and public venues that need to identify a threat before it escalates, using camera infrastructure they already have in place.

Why It Represents a Fundamentally Different Approach

The difference between these two technologies isn't incremental. It's structural.

Acoustic detection is built around an event. Something has to happen before the system can respond. Video-based AI detection is built around presence. The threat doesn't have to escalate for the system to act.

That shift moves security from a reactive posture to a preventive one.

Beyond timing, video-based detection integrates naturally with how facility security already works. Alerts include live video context, so responders know what they're walking into before they arrive.

The same camera infrastructure that handles surveillance, access control events, and incident review also powers the detection layer. There's no separate sensor network to maintain, no municipal coordination required, and no dependency on law enforcement infrastructure.

For security directors managing a campus, a school district, or a healthcare facility, that operational simplicity matters as much as the detection capability itself.

Side-by-Side Comparison: Video-Based Weapon Detection vs. Acoustic Gunshot Detection

The table makes one thing plain: these are not competing versions of the same tool.

Acoustic detection was engineered for a specific problem in a specific environment, outdoor urban gunfire that goes unreported, and it solves that problem well. Video-based AI detection was built for a different problem entirely, identifying a weapon inside a controlled facility environment before anyone is harmed.

The row that matters most for facility security buyers is the last one. Acoustic detection cannot alert you to a threat that hasn't yet produced a sound. Video-based detection can. For environments where the cost of a single missed intervention is catastrophic, that difference in trigger point is the deciding factor, not alert speed, accuracy rates, or pricing.

Can They Work Together? The Layered Defense Argument

The honest answer is yes, with an important caveat about where that combination actually makes sense.

For large outdoor campuses, university grounds, or public venues with significant exterior space, layering acoustic detection on top of video-based AI creates a more complete coverage picture. Video handles interior spaces and visible threats. Acoustic sensors cover exterior blind spots where cameras may not reach. That layered approach has real merit in specific environments.

It doesn't, however, change the fundamental sequencing problem. Adding ShotSpotter to a facility that already has video-based weapon detection doesn't give you earlier warning. It gives you backup confirmation after a shot has already been fired. That is meaningful for outdoor coverage gaps, but it's not a prevention capability.

For most K-12 schools, community hospitals, and single-building campuses, the case for layering is weaker. These environments are predominantly indoor, camera infrastructure is already in place, and budget constraints make dual-system investment hard to justify.

Where layering makes the most sense:

- Large university or corporate campuses with significant outdoor footprint

- Public venues with open plazas, parking areas, or exterior gathering spaces

- Facilities within a municipally funded ShotSpotter coverage zone, where the acoustic layer comes at no added cost

- High-security environments where redundancy is a deliberate design requirement

The takeaway isn't that one system makes the other unnecessary. Video-based detection solves the indoor, pre-discharge problem that acoustic detection was never designed to address. Any layering decision should start from that baseline.

How to Evaluate a Video-Based Weapon Detection System

Video-based weapon detection systems are not all built the same way. AI architecture, alert delivery, and system integration vary significantly across vendors.

These are the criteria that separate a system that genuinely reduces risk from one that adds a dashboard without changing outcomes.

1. Detection accuracy and false positive rate

A system that generates frequent false alarms will be ignored. Security teams in high-traffic environments quickly learn to tune out alerts that don't lead to real threats. Ask vendors how false positives are mitigated and request data that supports their accuracy claims rather than accepting marketing figures at face value.
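
When a vendor does share evaluation data, a few simple ratios make it easier to compare claims. The sketch below is one way to summarize such data. The counts are made-up illustrative numbers, not figures from any vendor, and the function name is hypothetical.

```python
def alert_quality(true_pos, false_pos, false_neg, cameras, days):
    """Summarize alert quality from raw counts over an evaluation period.

    precision: share of alerts that were real weapons
    recall: share of real weapons that produced an alert
    false_alerts_per_camera_day: how often staff are interrupted for nothing
    """
    alerts = true_pos + false_pos
    actual = true_pos + false_neg
    precision = true_pos / alerts if alerts else 0.0
    recall = true_pos / actual if actual else 0.0
    false_alerts_per_camera_day = false_pos / (cameras * days)
    return precision, recall, false_alerts_per_camera_day

# Illustrative numbers only: a 30-day trial across 100 cameras.
precision, recall, fa_rate = alert_quality(
    true_pos=48, false_pos=12, false_neg=2, cameras=100, days=30
)
print(f"precision={precision:.2f} recall={recall:.2f} false alerts/camera/day={fa_rate:.3f}")
```

A single headline accuracy number can hide a high false alert rate, so it is worth asking for the underlying counts and the conditions under which they were collected.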

2. Alert speed and delivery

Speed matters, but so does what the alert contains. A location coordinate gives responders less to work with than a live camera feed and a visual of the detected weapon. Coram sends alerts within 5 seconds of detection, including the live video feed, camera location, and detection image.

3. Camera compatibility

The strongest systems work with existing IP cameras via standard protocols, eliminating hardware replacement costs that rarely appear in initial vendor quotes. Confirm compatibility before shortlisting any vendor.

4. On-site vs. cloud processing architecture

Edge-based processing keeps response times fast and ensures the system keeps running during network outages. Cloud-only architectures introduce latency and a single point of failure if connectivity drops during an incident.
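
One way to picture the difference is a decision function that prefers cloud verification but keeps working when the network is down. This is a hypothetical design sketch, not a description of how any particular product behaves. The thresholds, the stricter offline cutoff, and the `verify_in_cloud` stand-in are all assumptions.

```python
def verify_in_cloud(score, network_up):
    """Stand-in for a remote verification call; raises when the network is down."""
    if not network_up:
        raise ConnectionError("cloud unreachable")
    return score >= 0.90  # hypothetical cloud confirmation threshold

def decide(score, network_up, offline_threshold=0.95):
    """Return (alert, path).

    Normally the cloud check decides. If it cannot be reached, fall back to a
    stricter local threshold so the system still alerts on high-confidence
    detections during an outage.
    """
    try:
        return verify_in_cloud(score, network_up), "cloud"
    except ConnectionError:
        return score >= offline_threshold, "edge-fallback"

print(decide(0.92, network_up=True))
print(decide(0.97, network_up=False))
print(decide(0.92, network_up=False))
```

A cloud-only design has no equivalent of the `except` branch: when connectivity drops, detection stops. Whether a given vendor falls back this way, and with what trade-off in false positives, is a question worth asking directly.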

5. Integration with emergency response workflows

An alert that notifies one security guard is a different capability than one that triggers a building lockdown, notifies all staff, and routes to 911 simultaneously. Evaluate how the system connects to your access control, emergency management, and communications infrastructure.

6. Deployment complexity

Systems that deploy against existing camera infrastructure and go live quickly reduce both cost and risk. Ask vendors for realistic time-to-live estimates based on comparable deployments.

For superintendents, school board members, and facilities committees, the practical question is simple: does this system give staff a chance to act before someone is hurt, or does it confirm faster that someone already has been? That answer should anchor every budget conversation.

The Best Defense Starts Before the First Shot

Acoustic gunshot detection is a proven, valuable tool. For law enforcement managing outdoor gun violence across large urban areas, it fills a gap that no other technology addresses as effectively.

But that's not the problem most schools, hospitals, and campuses are trying to solve.

The facilities that suffer the most from weapon incidents are predominantly indoor, predominantly camera-equipped, and almost always looking for a way to intervene before a situation becomes unrecoverable. Acoustic detection doesn't serve that need. It was never designed to.

Video-based AI weapon detection operates at the only point on the threat timeline where prevention is still possible. The moment a weapon becomes visible on camera, the alert window opens. That's the difference between a security system that confirms what happened and one that gives you a chance to change what happens next.

Coram brings that capability into a single platform alongside video surveillance, access control, and emergency management. There is no separate sensor network, no third-party coordination, and no alert that arrives after the fact. A unified system sees a threat coming and puts the right people in motion before it escalates.

FAQ

What is the difference between video-based weapon detection and acoustic gunshot detection?

Video-based detection identifies a visible, brandished firearm before a shot is fired. Acoustic detection identifies the sound of a discharged weapon after the fact. The core difference is timing. For schools, hospitals, and campuses, that distinction determines whether your security posture is built around prevention or response.

Can AI weapon detection identify a firearm before a shot is fired?

Yes. AI weapon detection analyzes every frame of live video and identifies a visible firearm the moment it appears on camera. No discharge is required. The system sends a notification to your security team within seconds, before any shot is fired.

How quickly does each system send an alert?

Coram sends confirmed alerts within 5 seconds of detection, including the live camera feed, detection image, and location. Acoustic gunshot detection dispatches an alert within 60 seconds of a shot being fired, after a mandatory human review step. The more important difference isn't speed. It's that video-based alerts fire before a weapon is discharged at all.

Do I need to replace my existing cameras to use AI weapon detection?

In most cases, no. Systems like Coram work with existing IP cameras using standard protocols. Detection performance depends on camera placement and resolution rather than brand or age. Most facilities can deploy AI weapon detection without a significant hardware investment.

How do the costs compare?

ShotSpotter typically costs $65,000 to $90,000 per square mile annually, a model designed for municipal law enforcement budgets. For individual facilities, the economics rarely work without grant funding. Video-based AI detection runs on a per-camera or per-site subscription, layered on infrastructure most facilities already own, making it substantially more accessible for schools, hospitals, and campuses.